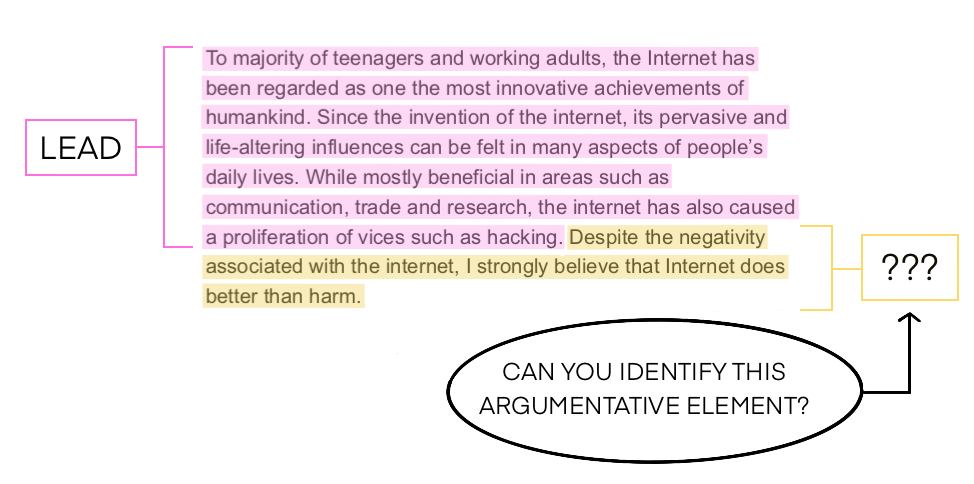

Feedback Prize - Evaluating Student Writing

March 2022 | Kaggle

Finished in the top 3%. Won a silver medal.

Objective was to automatically segment texts and classify argumentative and rhetorical elements into classes: Lead, Position, Claim, Counterclaim, Rebuttal, Evidence, and Concluding Statement, in essays written by 6th-12th grade students.

Jigsaw Multilingual Toxic Comment Classification

June 2020 | Kaggle

Finished in the top 2%. Won a silver medal.

Objective was to predict toxicity for 6 different languages given a dataset consisting of only English comments from the previous two jigsaw competitions. Needing to use TPUs to even have the ability to train massive models and the validation set having only 3 languages to evaluate locally made it really challenging.

Writeup

Quality Forecasting in Cement Manufacturing

Nov 2019 | CrowdAnalytix

Finished 2nd out of 479 participants and awarded a prize money of 2500 USD.

Objective was to develop a robust model to predict Free Lime. What makes this challenge interesting was the small size of the train/test split (~5K/1K rows) which makes it prone to overfitting, presence of several outliers in the dependent variable, and two level evaluation criteria (Mean Absolute Error + R squared) to judge the Private LB.

Report

Pover-T Tests: Predicting Poverty

Feb 2018 | DrivenData

Finished 6th out of 2,310 participants hosted by the World Bank.

Measuring poverty is hard. The World Bank can now build on open source machine learning tools to help predict poverty, optimize uses of survey data, and support work to end extreme poverty in the next decade.

Writeup

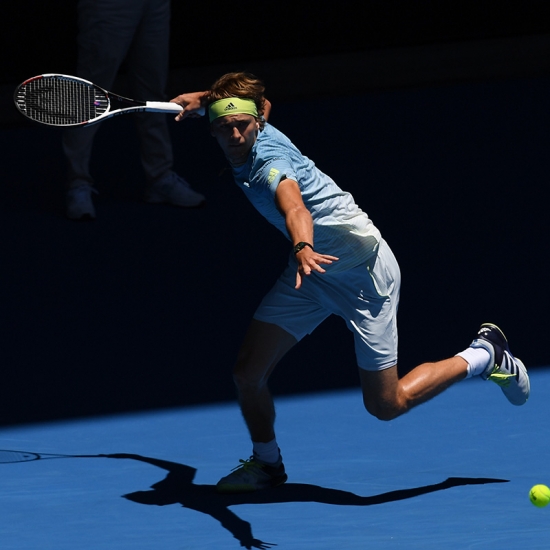

Predicting How Points End in Tennis

Jan 2018 | CrowdAnalytix

Finished 2nd out of 750 participants and awarded a prize money of 2500 USD. Featured in Tennis Australia official press release. See here.

My model achieved an overall accuracy close to 95 percent – 98 percent for winners, 89 percent for forced errors and 95 percent for unforced errors.

Report

Click Prediction Hackathon

Oct 2017 | AnalyticsVidhya

Finished 3rd out of 2975 participants and awarded a prize money of ₹25000.